By Payton Cordova

(Illustration by Payton Cordova/Radio1190)

Will artificial intelligence solve your problems? Will it help you buy food for the week? Will it help you make rent? Will it tell you how best to spend your trust fund?

Maybe you just need a wingman; someone more knowledgeable than you who can tell you the right thing to say during that job interview, your first date or your wedding vows.

Maybe you’re drowning in a slick lake of information and need someone to drain it. Something so totally exposed to every human argument, lie, fantasy and horror couldn’t help but tell you the truth, could it?

Maybe you’ve been given a pocketbook of matches and been told it will make the darkness bearable.

Maybe you’ll just hear what keeps you coming back for more.

The Sway of Mass Media

The introduction of artificially generated media, whether text, photos, music or videos, has scared an awful lot of people. Economists argue what we are experiencing is simply a tech revolution, increasing humanity’s output with new factors of production. Since Midjourney introduced the public to prompt-generated images in 2022, it has grown exponentially more difficult to spot the signatures of AI in human communication. YouTube channels, Instagram commentators and subreddits build whole audiences by doing you the service of exposing media as the products of AI. Government bureaus employ digital forensic specialists who utilize large digital models to deduce the origin of artificial media.

If state governments deem it necessary to learn how to spot AI, this technology seems to pose a substantial risk to the status quo. Erosive rivers emerging from a wellspring of self-authoring code define this fear. Digital information, operating procedures, and public opinion could all be rewritten without bloodshed or governmental litigation. The road to radical progress has lost its speed limit, or so we hear. Today, our personal algorithms are the first battleground to prove it.

The fight for authority over reality is nothing new. Since the days of Martin Luther and Johannes Gutenberg, whatever dominant governing body has existed has feared the ability of a cheap text to render reality. While Martin Luther was printing his pamphlets in the language of the masses, the church continued to trade in Latin. It soon lost the ability to control the narrative. Later, Thomas Paine, Jonathan Swift, Mary Wollstonecraft, and George Orwell each utilized cheap, portable pamphlets to spread their causes. Governing bodies soon learned from the strategies of the commons.

The printing press was accepted as a valuable tool for the maintenance of mass opinion. State-facilitated texts, photographs, and film were soon produced. Today, the United States may not require you to watch its latest video about the importance of maintaining a better weapons arsenal than our enemies, but our popular fiction immerses us in the power-fantasies of superheroes, pilots and intelligence agents. The successful domination of these media diets weaves audiences into a frame of common belief. This work need not be authoritarian to be successful; only more pleasurable than the alternative.

We are not living in the latest Mission: Impossible film’s vision of an independent AI that worms its way into the world’s nuclear codes. No, we’re in the one where a few invested reporters at the Wall Street Journal got an AI-operated vending machine to declare an “Ultra-Capitalist Free-for-All” before giving away all its inventory for free. Right now, applications of AI remain dependent upon human prompting and are therefore dictated by our own peculiar social frames.

I argue, though, that the effects of an AI assistant prompted to change a string of code have a shorter half-life than those of one prompted to change the beliefs of a human. Adapting group belief to a new paradigm through an armada of bots and memes poses less risk than building a more ruthless autonomous war machine than your worldly brother.

The 2026 edition of the “International AI Safety Report,” commissioned by the UK, found that emails to colleagues, summaries of web queries, and image and video generation number among the top requests from users of OpenAI’s ChatGPT. In another study, after a five-minute conversation, participants misidentified text generated by OpenAI’s GPT-4o model as human-written 77% of the time. While artificial intelligence is not capable of reliably controlling market rates on its own, humans act with an agency that is notoriously hard to regulate against. If ChatGPT can move a person to suicide, as has been claimed by seven families and counting, what else could it bring a person to do? What could the lonely, fearful of their inadequacy to participate in society, be moved to? What could fear-profiteers ask a computer to create in seconds? Persuasion wearing a human suit, unanswerable to guilt and punishment, is a gift to anyone battling it out for online attention.

Digital Assassination

The lives of Renee Good, Alex Pretti and Nekima Levy Armstrong of Minneapolis have been possessed by AI. After Good’s death, speculators asked the technology to unmask her killer, with results that sent fury to a person who never existed. Soon after Pretti’s killing, MS Now used an AI-altered headshot of him in its broadcast. Other questionable images of Pretti spread online: an AI image of Pretti assisting disabled veterans, another claiming to show the nurse dressed in drag. As further footage of Pretti breaking the taillight of a federal vehicle came online, some speculated the footage released by the government was part of a conspiracy to discredit Pretti using AI. According to a February poll by Quinnipiac University, 61% of voters think the Trump administration has not given an honest account of the fatal shooting of Alex Pretti. If there is one thing Trump has succeeded in doing, it is destroying trust in federal authority; driving us towards the arms of private business.

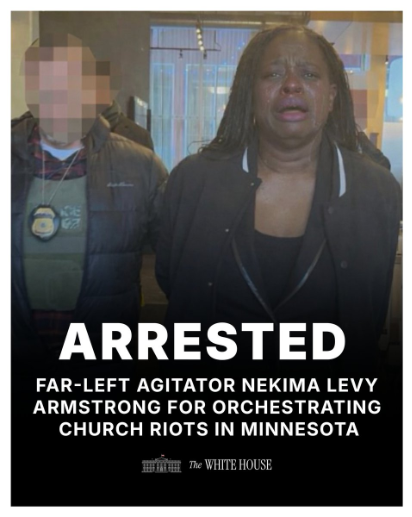

After the deaths of Pretti and Renee Good in Minneapolis, organizer Nekima Levy Armstrong learned that the acting director of ICE’s field office in St. Paul, David Easterwood, was employed as a pastor of a local church. A lawyer and ordained minister herself, Levy Armstrong interrupted a Sunday service at Cities Church in St. Paul. Former Attorney General Pam Bondi brought charges against Levy Armstrong for violation of the FACE Act (Freedom of Access to Clinic Entrances & Places of Religious Worship), claiming that Levy Armstrong and other protestors were hindering the free practice of religion. After her arrest, the Department of Homeland Security shared an image on Elon Musk’s X platform of the lawyer, composed, standing in handcuffs next to the blurred face of a federal agent. Soon after, the White House shared a near-identical image of Levy Armstrong’s arrest. Except this time, her skin had been darkened and she was crying.

(An AI-doctored photo of Nekima Levy Armstrong’s arrest appeared on U.S. federal social media accounts. Source: The White House/X)

The White House press secretary, Karoline Leavitt, reposted the image on X. News organizations took note of similarities in the images and proclaimed that it had been digitally altered by generative AI. To this day, the Trump administration has not retracted or deleted the image, apologized for it or labeled it as artificially generated. Instead, Kaelan Dorr, the deputy communications director of the White House, called the photo a meme.

By portraying their fabricated image of Levy Armstrong as a joke, the White House communications staff leverage misinformation under the guise of free speech.

(Kaelan Dorr/X)

Of the three Americans possessed by digital discourse, only Nekima Levy Armstrong lives to counter misrepresentations against her. Each of these people has already vanished from national headlines, but the reality of their lives remains dirty; faces caked in digital mud and fired through the kiln of public argument. They have been forced into the costumes of heroes and demons. More will follow in their cast.

AI media found on social media acts as a megaphone for the fears of its audience. Any interested actor with sufficient resources may select among those fears to fuel their desired outcome—strengthening group belief and distrust in the Other. The availability of convincing image generation technology released by AI heavyweights–OpenAI, Anthropic, Google and Microsoft–has accelerated the erosion of trust in mainstream authority.

When a state conspires to control the flow of public information, it attempts to eliminate reasoned dissent. By normalizing generative AI as an “accurate enough” representation of reality, consensus over any event–whether that hurricane really did flood so many homes or whether that immigrant really did do those horrible things–stalls in debate until the more emotionally convincing image wins.

For the first time, manufacturing consent for major political action does not need a primary document to manipulate the context of a statement. An inflamed cultural target is sufficient, be it the Black Welfare Queen, the Native American selling a false history of colonization or the Pope.

(An AI-generated video shared by Facebook page “Native American sunlowe vibes.” The page claims it is “honoring tradition, protecting heritage, and amplifying Indigenous voices–past, present, and future.” Credit: Native America Calling.)

Cheap Entertainment

Tech oligarchs Musk, Bezos, Zuckerberg and the Ellison family have each wrested control over a portion of the American information diet. Bezos bought the Washington Post in 2013. In 2022, Musk bought Twitter and retooled it as a platform for generative training. In 2025, Larry Ellison acquired a controlling share of American TikTok. His son, David Ellison, now owns Paramount Pictures. With the closure of a Paramount-Warner Bros. Discovery deal on the horizon, Ellison is set to control both CBS and CNN. Zuckerberg built Facebook, Instagram and Threads into a discursive powerhouse of millions of daily users.

From videos scrolled on the toilet to media multiplexes, the work of these oligarchs is defined by how well they master fear and distraction. The times we do speak with our neighbors, we regurgitate the same easy opinions of our favorite talking heads.

To Peter Thiel, Silicon Valley magnate of Palantir, Tesla, and PayPal, desire is created by seeing another person want an object. Thiel’s mentor, the philosopher René Girard, first asserted this mimetic theory of desire in his 1961 book “Deceit, Desire and the Novel.” Since Thiel’s tutelage under Girard, his words have become a bellwether for investors utilizing the mimetic framework. Simply, ‘does this product provide a sense of security to the person using it? If another person doesn’t have access to the same product, will they feel less secure knowing it exists?’ This is the leverage that PayPal’s secure payments and Palantir’s intelligence sorting exploit; the same function that has led others to speculate Thiel is funding masculine insecurity through the face of twenty-year-old “manosphere” streamer Clavicular.

The Obama administration first laid the groundwork for increased federal funding of artificial intelligence research in October 2016. With Executive Order 13859 in February 2019, the first Trump administration instructed Russell Vought, director of the United States Office of Management and Budget, to prioritize funding for AI development. This set the stage for a national policy that impedes regulation of artificial intelligence technologies. 2020 saw the quiet adoption of the “National AI Initiative Act” by Congress, fulfilling the directives of Executive Order 13859. Last year, the venture capital arm of the CIA, In-Q-Tel, put up $25 million in funding for OpenAI, just a drop in a sea of AI start-up investments. To our tech oligarchs, the second Trump administration presented a pathway to limiting the development of critical information technology to private industry.

Just as Palantir CEO Alex Karp seeks for his company to be seen as a dangerous but necessary weapon to “power the West to its obvious, innate superiority,” the CEOs of OpenAI and Anthropic consistently issue warnings about the danger of the commodities they engineer; stoking an arms race where losers will be left behind in the dust of a brick-and-mortar world.

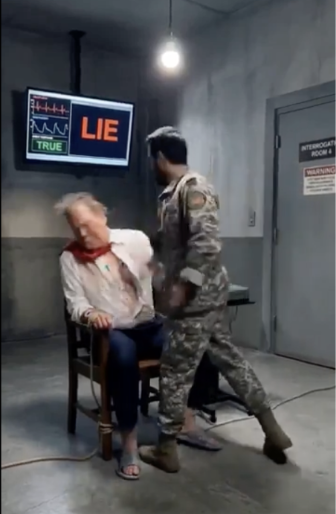

(Still from an artificially generated video featuring the interrogation of Donald Trump by an IRGC officer. The video is credited to Iranian state media, but no corroborating evidence has been found. Source: X)

The world of AI is a tale of data optimization. On one end, artificial intelligence supercharges the recognition of patterned relationships. Hope for improvements in cancer treatment selection and epilepsy prediction has been evidenced by throwing our digital net into the ether. However, that same deep bank of data has also made it possible for digital influence campaigns to be enacted at an unfathomable scale.

Hegseth’s threat to further jettison the safety conditions on the Department of War’s involvement with Anthropic indicates that the White House is already pressuring the industry to create separate rules of engagement for those willing to pay for them. Have we created anything new, as so many have promised? Identification methods behind content creation may be yet another condition abandoned, yet this may not even be a significant hindrance to anyone who is intent on molding public opinion. If AI media becomes a perfect counterfeit, will we only be able to trust the days we have personally lived through? Have we built a forgery machine we can’t counter?

Disinformation campaigns overwhelm people until they can’t care much about anything. When our ambitions break down, we lose the ability to know anything except the sweat needed to scrape through the day. The substitution of digital persuasion for personal conversation is an assault powered by sheer quantity, stranding our confidence to act amidst a sea of quick pleasures. How can you meet your neighbor if you have never seen each other beyond a screen? If we aren’t careful, welcoming apathy’s whispers into our beds will kill us before we ever wake.

There is a pervasive thought in Silicon Valley that if technicians can just amass a “complete” data set, then the AI will find the parts needed for the improvement of its own organs; bringing about an eventual triumph of reason that humans could not build alone. But this motivation to build a superintelligence is divided amongst those who truly wish to create an alien master to humanity, the would-be puppeteers harnessing the master’s mask for their own ends and the technicians just trying to get by while they still can. No matter the camp, creating a god isn’t necessary to subject people to a powerful influence—only a cheap text is needed.